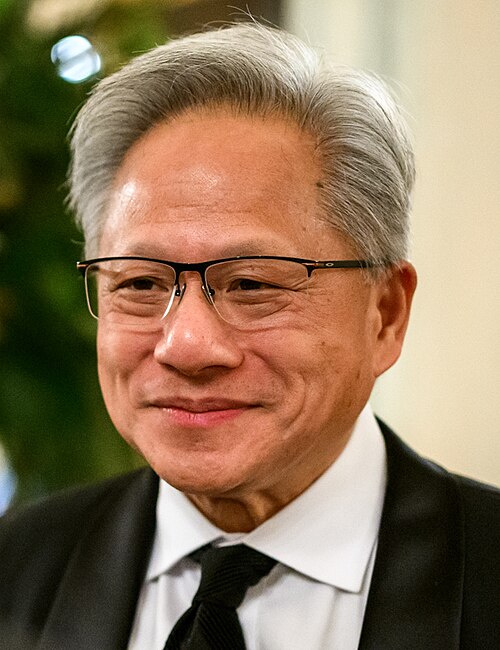

NVIDIA, the first company to reach a $5 trillion valuation, is hosting a conference right now in San Jose, California. It’s called NVIDIA GTC, and NVIDIA founder and CEO Jensen Huang just gave the keynote at the Shark Tank. Huang said that 450 companies are exhibiting and NVIDIA is showcasing 129 robots. This story outlines a few things he mentioned.

I understood the multitrillion-dollar valuation after hearing from its founder that NVIDIA’s customers and partners represent one third of all AI compute power globally. That’s a lot!

As an aside, he’s skilled at giving numbers that amaze the listener. He’s constantly saying things like, “a million times” or “a trillion dollars” or “10,000 fold.” Some of those items are referred to here.

Here are 13 points from his talk, as well as IBM and Samsung booth photos.

- There’s an enormous need for more compute power and along with this, a stunning increase in the intelligence of AI. NVIDIA’s website says that when it comes to AI model training, the sheer magnitude of compute power required is staggering. NVIDIA tools keep all of this going.

- The computing demand of work has gone up 10,000 times. I researched this statement further, and it appears to mean since 2022, so over the past four years.

- It’s the 20th anniversary of NVIDIA CUDA. It’s been introduced into every single industry ecosystem. He later clarified, saying “almost every” industry. CUDA was announced on the back of GeForce, the greatest marketing campaign launched 25 years ago. They took CUDA to every computer system.

- Today NVIDIA announced the fusion of 3D graphics and AI. Huang mentioned IBM as a user of the new solution. IBM and NVIDIA are now using NVIDIA CUDA for watsonx. Nestlé is using the IBM tool.

- Data is the ground truth that gives AI meaning. Inference is your workload and tokens are your new commodity.

- Structured data is the foundation of trustworthy AI. Azure, Databricks, Google Cloud, and others feed into this.

- ChatGPT represents the generative AI era. It has profoundly changed how computing is done. Huang said he used ChatGPT this morning.

- Inference inflection was mentioned several times as a key message. Inference drives your revenues. The faster you can inference the more you can do. The better your inference, the smarter your AI.

- NVIDIA is an algorithm company. They work with Google Cloud and AWS. He also name-dropped Oracle, Microsoft Azure, OpenAI, and Anthropic a few times. I counted three Anthropic mentions in hour one.

- NVIDIA is the first vertically integrated, but horizontally open, accelerated computing company.

- Industries he mentioned included autonomous vehicles; algorithmic trading, which is going through a “deep learning moment”; AI biology for drug discovery; and physical AI robotic systems. He also mentioned quantum, media, and gaming, among others.

- Robotics is a $50 trillion industry. He’s said this before.

- The inflection point of inference has arrived. Every time AI computes, it has to reason.

If you work in tech or want to, I recommend you listen to his whole keynote. It’s very technical and contains a lot of new product news.

My key takeaway is that the founder of NVIDIA has a lot of trust in AI right now, and that trust is fueling the need for more compute power. His company is at the forefront of supplying the tools to try to satiate this hunger. Although Huang spoke about industry trends, the keynote was mostly an NVIDIA advertisement for new and newish products. This is fine because I believe that’s what attendees wanted to hear.

###

Michelle McIntyre is a Silicon Valley-based tech public relations consultant known for her expertise in AI and robotics. She’s an IBM vet and a ranked future of work influencer with 3.5 million Quora views for her advice on elite college admissions. DM her on LinkedIn or Facebook to get in touch. IBM and Samsung booth photos were taken by David McIntyre.